Scraping with Python in the Newsroom

Day One - GIJC 2015, Lillehammer

By Tom Meagher / @ultracasual

and Tommy Kaas / @tbkaas

Why should you code?

Cover more ground, faster

Helps document reporting

Makes analysis replicable

Automation!

We won't learn everything

Git or GitHub

pip or virtualenvs

Frameworks

Journalism > "Development"

This will not be nuanced, idiomatic Python.

Some programmers may be saddened by this code.

But you know what? If it works, and it works on deadline, that's what matters for us today.

The goal

To start thinking about

how to break problems down

into the smallest tasks

that can be programmed.

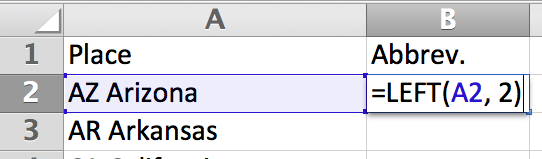

If you know Excel

You can learn to program.

- "AZ Arizona" is a string.

- "A2" is a variable.

- "=left()" is a function.

- A2 and 2 are function arguments.
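The same four ideas carry over to Python almost one for one. This is a minimal sketch, assuming cell A2 holds "AZ Arizona" and the formula is =LEFT(A2, 2); the names `a2`, `left` and `state_code` are made up for illustration.

```python
# "AZ Arizona" is a string. a2 is a variable that holds it,
# standing in for spreadsheet cell A2.
a2 = "AZ Arizona"


def left(text, num_chars):
    """Return the first num_chars characters, like Excel's LEFT()."""
    return text[:num_chars]


# a2 and 2 are the function arguments.
state_code = left(a2, 2)
print(state_code)  # AZ
```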

Why Python?

Easy to learn.

Explicit.

Mature and well-documented.

Strong PythonJournos community of support.

Prep your workspace

To set up your machine, you'll need to have Python 2.7, pip, virtualenv and virtualenvwrapper installed.

#create and activate a sandbox to work in

mkvirtualenv gijc15

#clone the code repo from GitHub

git clone git@github.com:tommeagher/pythonGIJC15.git

#install the dependencies: requests, beautifulsoup4, unicodecsv

pip install -r requirements.txt

#launch the interactive interpreter

ipython

Strings

Strings are ordered sequences of characters wrapped in quotes.

var1 = "This class is at GIJC in Lillehammer."

var2 = "&You!_123 Four"

If you want to cheat, the answers are here.
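A few things you can try on a string like var1 in the interpreter. This is only a sketch of common string operations, not the workshop's exercise answers; the names `first_word`, `shouted`, `words` and `swapped` are invented here.

```python
var1 = "This class is at GIJC in Lillehammer."

first_word = var1[:4]        # slicing: characters 0 through 3
shouted = var1.upper()       # a new string in capital letters
words = var1.split(" ")      # a list of the words, split on spaces
swapped = var1.replace("GIJC", "a conference")

print(first_word)   # This
print(len(var1))    # how many characters the string has
print(words[-1])    # Lillehammer.
print(swapped)
```

None of these change var1 itself. Each one hands back a new value.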

Integers and Floats

Numbers that you can do math on.

Integers are whole numbers.

Floats are decimals.
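A quick sketch of both number types, with made-up values. One thing to watch in Python 2.7, which this class uses: dividing one integer by another throws away the remainder, so 7 / 2 gives 3. Converting one side to a float keeps the decimal.

```python
reporters = 7       # an integer
budget = 1250.50    # a float

total = reporters + 3            # integer math
half = float(reporters) / 2      # float math keeps the decimal

print(total)               # 10
print(half)                # 3.5
print(budget * 2)          # 2501.0
print(type(reporters))
print(type(budget))
```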

Lists

An ordered collection of objects, wrapped in brackets.

my_list = [1, 2, "Liberty Bell"]
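A short sketch of what "ordered" buys you: items can be fetched by position, counting from zero, and looped over in order. The appended value is just an example.

```python
my_list = [1, 2, "Liberty Bell"]

first = my_list[0]     # positions start at 0
last = my_list[-1]     # negative positions count from the end

my_list.append(3.5)    # add an item to the end
length = len(my_list)

for item in my_list:
    print(item)
```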

Dicts

A collection of named keys and their associated values, wrapped in curly braces.

my_dict = {'Fruit': 'Orange', 'Weight': 10}
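A sketch of looking values up by key instead of by position. The 'Color' and 'Price' keys are hypothetical, added only to show assignment and a missing key.

```python
my_dict = {'Fruit': 'Orange', 'Weight': 10}

fruit = my_dict['Fruit']          # look up a value by its key
my_dict['Color'] = 'Orange'       # add a new key and value
price = my_dict.get('Price')      # .get() returns None if the key is missing

for key in sorted(my_dict):
    print("%s: %s" % (key, my_dict[key]))
```

Asking for my_dict['Price'] directly would raise a KeyError, which is why .get() is handy when a key may not be there.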

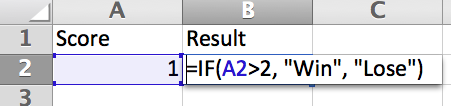

Conditionals

Logic that can trigger other operations,

similar to Excel's if function.

In Python:

score = 1

if score > 2:

    print "Win"

else:

    print "Lose"
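Where Excel nests IF functions, Python chains the branches with elif. In this sketch the `result` function and the "Draw" branch are invented for illustration. The indentation is not decoration: it is how Python knows which lines belong to which branch.

```python
def result(score):
    """Return a label for a score, checking each condition in order."""
    if score > 2:
        return "Win"
    elif score == 2:
        return "Draw"
    else:
        return "Lose"


print(result(1))  # Lose
print(result(2))  # Draw
print(result(5))  # Win
```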

Intermission

For more practice with the basics,

try this tutorial from PyCAR, or this one.

Hour Two

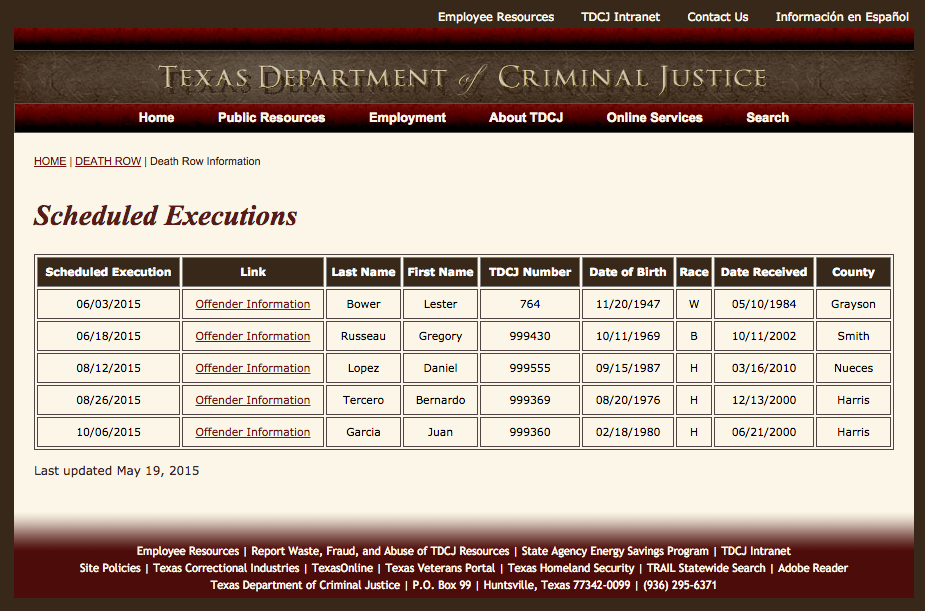

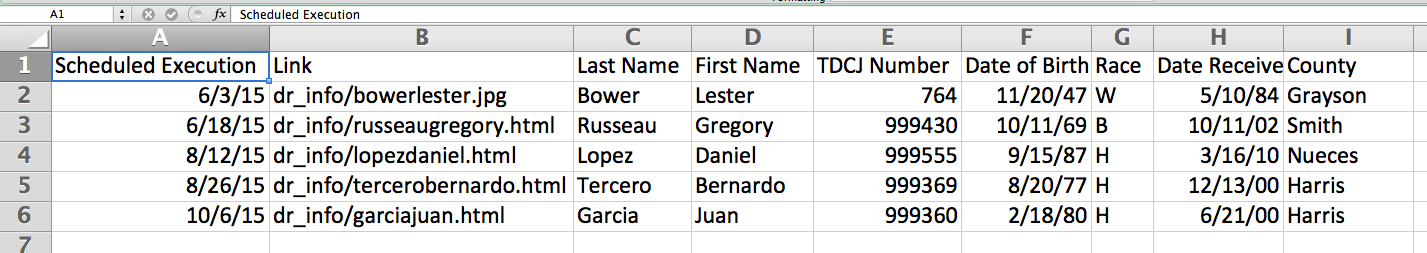

The Problem

How to go from this...

...to this?

The Reporting Phase

- Find a website

- Before you do anything else, ask for the data.

- If that doesn't work...

- Does it look like a data table?

- "View Source"

- Is there a table tag?

- Does it follow a predictable pattern, like

body >> div >> table >> tr >> td ?

The Writing Phase

- Make an HTTP request to the site.

- Collect the text content of the response.

- Parse the text and step through it, tag by tag.

- Find a tag, assign its content to a variable.

- Store those variables in lists or dicts.

- Make any additional requests to other pages and repeat the steps above.

- Loop through the collected list or dict and write it to a file.
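The steps above can be sketched end to end. To run with no installs and no network, this version swaps in Python's standard library for the workshop's tools: a hard-coded HTML string stands in for the response text you would get from requests, `html.parser` stands in for BeautifulSoup, and `csv` stands in for unicodecsv. It is written for Python 3, not the 2.7 used in class. The table contents, `TableParser`, and every other name here are made up for illustration.

```python
import csv
import io
from html.parser import HTMLParser

# Stand-in for the text of an HTTP response. The data is invented.
SAMPLE_HTML = """
<html><body><div>
<table>
  <tr><th>Name</th><th>City</th></tr>
  <tr><td>Ada</td><td>Oslo</td></tr>
  <tr><td>Bo</td><td>Bergen</td></tr>
</table>
</div></body></html>
"""


class TableParser(HTMLParser):
    """Step through the HTML tag by tag and collect the text of each cell."""

    def __init__(self):
        super().__init__()
        self.rows = []         # one list of cell strings per <tr>
        self.in_cell = False   # True while inside a <td> or <th>

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self.rows.append([])          # start a new row
        elif tag in ("td", "th"):
            self.in_cell = True
            self.rows[-1].append("")      # start a new, empty cell

    def handle_endtag(self, tag):
        if tag in ("td", "th"):
            self.in_cell = False

    def handle_data(self, data):
        if self.in_cell:
            self.rows[-1][-1] += data.strip()


# Parse the text.
parser = TableParser()
parser.feed(SAMPLE_HTML)

# Store each data row as a dict keyed by the header row.
header = parser.rows[0]
records = [dict(zip(header, row)) for row in parser.rows[1:]]

# Loop through the records and write them out as CSV. An in-memory
# buffer stands in for a real file on disk.
output = io.StringIO()
writer = csv.DictWriter(output, fieldnames=header)
writer.writeheader()
for record in records:
    writer.writerow(record)

print(output.getvalue())
```

This only handles one simple table with no nesting. Real pages are messier, which is much of the reason to reach for a parsing library.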

Let's go

Open your text editor of choice and a terminal window.

Write a line or two of code under each comment,

save the text file and then try to run:

python scrape1.py

Hiatus

Keep learning

Excellent post on ethics of scraping

More resources for learning Python

GitHub, Stack Overflow, Google

--30--

Clone the source code

Check out day two's exercises.